FAQ: Apache Arrow Integration in mssql-python

In this Q&A, we explore the new Apache Arrow support in mssql-python, a significant enhancement for high-performance data pipelines. Learn how Arrow's zero-copy architecture reduces memory overhead and speeds up data fetching from SQL Server. This feature was contributed by community developer Felix Graßl and is now available for users working with Polars, Pandas, DuckDB, and other Arrow-native libraries.

What is the new feature in mssql-python?

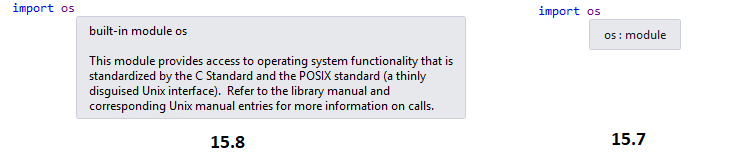

The mssql-python driver now supports fetching SQL Server data as Apache Arrow structures. Instead of converting each row into Python objects, the driver writes values straight into Arrow's columnar format in its C++ layer. This avoids creating millions of Python objects and reduces garbage-collector pressure. The result is a faster, more memory-efficient path for moving data from SQL Server into DataFrame libraries like Polars or Pandas. The feature is built on the Apache Arrow C Data Interface, a cross-language ABI that allows zero-copy data exchange between programming languages without serialization.
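To make the row-versus-column distinction concrete, here is a small pure-Python illustration. It is only a sketch of the target layout, not mssql-python code: the `rows` data and the `to_columns` helper are hypothetical, and unlike the driver's C++ path this version still touches a Python object for every value. It transposes row tuples (the shape a DB-API `fetchall()` returns) into one contiguous, typed buffer per column, using the standard-library `array` module as a stand-in for Arrow buffers.

```python
from array import array

# Hypothetical result set in row-oriented form, as fetchall() would return it.
rows = [(1, 2.5), (2, 3.0), (3, 4.25)]


def to_columns(rows, typecodes):
    """Transpose row tuples into one contiguous, typed buffer per column.

    `typecodes` uses array-module codes: "q" = signed 64-bit int, "d" = double.
    """
    columns = [array(code) for code in typecodes]
    for row in rows:
        for column, value in zip(columns, row):
            column.append(value)
    return columns


ids, prices = to_columns(rows, ("q", "d"))
# Each column is now a single block of fixed-width values (8 bytes each here).
print(ids.tolist(), prices.tolist())
```

The point of the layout is that all values of a column sit next to each other in memory, which is what lets a DataFrame library scan or hand off a whole column at once.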

How does Apache Arrow achieve zero-copy interoperability?

Apache Arrow defines a stable in-memory layout and a small binary interface for exchanging it, called the Arrow C Data Interface. This ABI consists of two plain C structs, ArrowSchema (describing the data type) and ArrowArray (describing the data), whose fields point to the underlying memory buffers. Any language that can read C structs can produce or consume an Arrow array by passing pointers to these structs; there is no need for serialization, copying, or re-parsing. The producer also supplies a release callback, which the consumer calls when it is finished so the producer can free the memory. For instance, a C++ database driver can write data into Arrow buffers, and a Python library can read that same memory immediately. This enables direct data exchange between languages without the runtime cost of converting between incompatible formats. Because the layout is columnar, values of the same type are stored contiguously, which makes operations like filtering and aggregation efficient.

What problem does this solve for Python developers?

Before Arrow support, fetching a million rows from SQL Server into a Python DataFrame such as Polars required creating a million row objects, plus a Python object for each value inside them. Each object needed its own memory allocation and added reference-counting and garbage-collector overhead, consuming CPU time and memory. The data then had to be converted a second time to build the DataFrame. With Arrow, the entire fetch loop runs in C++ and writes directly into columnar buffers, so no Python objects are created during the transfer. The DataFrame library receives a pointer to that memory and can start processing immediately. This sharply reduces memory usage (a column of integers becomes a single contiguous C array) and speeds up data ingestion, especially for large datasets.
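The memory argument can be checked roughly in CPython with the standard library alone. This sketch compares a list of boxed Python integers (the row-by-row representation of one column) with a contiguous buffer of 64-bit integers; the sizes come from `sys.getsizeof`, are CPython-specific, and vary by platform and version, so treat the printed figures as indicative only.

```python
import sys
from array import array

N = 100_000

# Row-oriented path: one heap-allocated int object per value plus a list of pointers.
boxed = [1_000_000 + i for i in range(N)]  # values outside CPython's small-int cache
row_bytes = sys.getsizeof(boxed) + sum(sys.getsizeof(v) for v in boxed)

# Columnar path: a single contiguous buffer of 8-byte integers.
column = array("q", boxed)
col_bytes = sys.getsizeof(column)

print(f"list of Python ints: {row_bytes / N:.1f} bytes/value")
print(f"int64 column:        {col_bytes / N:.1f} bytes/value")
```

On a typical 64-bit CPython build the boxed representation costs several times more per value, before counting any tuple or DataFrame-construction overhead.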

Which SQL data types see the biggest improvement?

Temporal types such as DATETIME and DATETIMEOFFSET benefit the most from Arrow support. In the traditional row-by-row fetch path, each value had to be materialized as a Python datetime object, which added significant overhead. With Arrow, these values are written directly into Arrow's temporal representations (for example, an Arrow timestamp is a 64-bit integer count of time units since the Unix epoch) with no per-row Python involvement. Numeric types like integers and floats also improve noticeably, because they are no longer wrapped in individual Python objects. For every column type, null values are tracked in a compact validity bitmap (one bit per value) instead of per-cell None objects.
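The two representations mentioned above, integer timestamps and validity bitmaps, can be sketched in a few lines of standard-library Python. The helper `to_arrow_timestamp_us` is hypothetical and makes assumptions that may not match mssql-python's actual type mapping: it uses microsecond units, treats naive datetimes as UTC, and normalizes offset-aware values (like DATETIMEOFFSET) to UTC instants. The bitmap follows Arrow's convention of least-significant-bit ordering with a set bit meaning "valid".

```python
from array import array
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_arrow_timestamp_us(values):
    """Encode Optional[datetime] values as (validity bitmap, int64 microseconds).

    Assumptions: microsecond unit; naive datetimes are interpreted as UTC.
    """
    validity = bytearray((len(values) + 7) // 8)  # one bit per value, initially "null"
    data = array("q", [0]) * len(values)          # one 8-byte slot per value
    for i, value in enumerate(values):
        if value is None:
            continue  # bit stays 0; the data slot's contents are ignored by readers
        validity[i // 8] |= 1 << (i % 8)  # LSB-first bit order, 1 = valid
        aware = value if value.tzinfo is not None else value.replace(tzinfo=timezone.utc)
        data[i] = (aware - EPOCH) // timedelta(microseconds=1)
    return bytes(validity), data


values = [
    datetime(1970, 1, 1, 0, 0, 1),
    None,
    datetime(2024, 1, 1, 12, 0, tzinfo=timezone(timedelta(hours=2))),
]
validity, data = to_arrow_timestamp_us(values)
print(validity, data.tolist())
```

Three values need only one bitmap byte, and the null costs one bit rather than a `None` reference in a Python list.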

What are the concrete benefits for mssql-python users?

Users gain three key advantages: speed (faster data fetching due to reduced Python overhead), lower memory usage (no per-row Python objects, compact columnar storage), and interoperability. Arrow data can be shared directly with Polars, Pandas (when using ArrowDtype), DuckDB, and Hugging Face Datasets without intermediate copies. For example, a Polars pipeline reading from mssql-python never needs to materialize per-value Python objects at any stage, enabling high-throughput data processing. The feature also opens the door to Arrow-native, vectorized compute in any language that understands the Arrow format.

Can I use Arrow with other tools beyond Polars?

Yes. Arrow support is designed for broad compatibility. The Arrow C Data Interface is the standard that makes zero-copy exchange possible between Arrow-native libraries, including Pandas (via ArrowDtype), DuckDB, Hugging Face Datasets, and any other tool that understands Arrow's memory layout. Because the data is already in Arrow format, you can pass it to these libraries without conversion overhead. This flexibility makes mssql-python with Arrow a strong choice for data engineering pipelines that mix languages or move data between Python, R, and C++ libraries.
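Inside a single Python process, the standard-library buffer protocol offers a small analogue of this kind of sharing. This is a swapped-in illustration, not the Arrow C Data Interface itself: two independent "consumers" take `memoryview`s over the same columnar buffer, one typed and one as raw bytes, and a write through one is visible through the other because no data is copied.

```python
import struct
from array import array

# One columnar buffer of doubles, as a producer might hand it out.
column = array("d", [1.0, 2.0, 3.0])

# Consumer A: a typed view over the producer's memory (no copy).
view_a = memoryview(column)
# Consumer B: a raw byte view of the very same memory (no copy).
view_b = memoryview(column).cast("B")

view_a[0] = 10.0  # write through consumer A...
total = sum(view_a)
# ...and consumer B decodes the first 8 raw bytes to the updated value.
first_from_bytes = struct.unpack_from("d", view_b, 0)[0]
print(total, first_from_bytes)
```

Arrow generalizes the same idea across language and library boundaries by standardizing both the buffer layout and the structs that describe it.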

Who contributed this feature and how was it developed?

This feature was contributed by community developer Felix Graßl (GitHub: @ffelixg), who integrated Apache Arrow's C Data Interface into mssql-python to enable zero-copy data transfer from SQL Server. The contribution was reviewed and merged by the project maintainers. Felix's work shows how open-source communities can enhance database drivers with modern data access patterns. Users can benefit from faster data fetching without changing their existing code: they use the driver's standard query methods, and Arrow structures are returned where supported. The feature is part of a broader trend of adding Arrow support to database drivers for better performance and interoperability.