7 Key Facts About Apache Arrow Support in mssql-python

If you've ever fetched a million rows from SQL Server into a Polars DataFrame, you know the pain: a million Python row objects get allocated and tracked by the interpreter, only to be thrown away once the actual DataFrame is built. That era is over. With the latest release of mssql-python, you can now retrieve SQL Server data directly as Apache Arrow structures, a faster and more memory-efficient path for anyone working with Polars, Pandas, DuckDB, or any other Arrow-native library. This feature was contributed by community developer Felix Graßl (@ffelixg), and we're excited to share what it means for your data pipelines.

- Arrow Basics: Zero-Copy, Columnar Memory

- Technical Foundations: API, ABI, and the Arrow C Data Interface

- Speed Boost: No Per-Row Python Objects

- Memory Efficiency: From a Million Objects to One Array

- Seamless Interoperability with Arrow-Native Tools

- Community Contribution: Felix Graßl's Work

- Under the Hood: How the Arrow Fetch Path Works

1. Arrow Basics: Zero-Copy, Columnar Memory

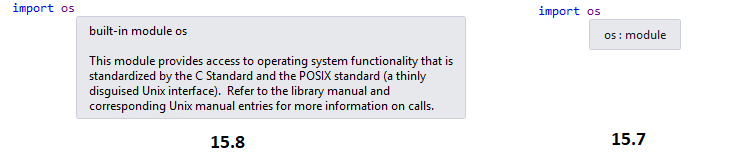

Apache Arrow's core innovation is zero-copy language interoperability. It defines a standardized in-memory columnar format, plus a stable ABI for sharing it (the Arrow C Data Interface), so that different programming languages can exchange data by simply passing a pointer. No serialization, no copies, no re-parsing. A C++ database driver and a Python DataFrame library can operate on the exact same memory without either knowing about the other's internals. The format is columnar: instead of representing a table as a list of rows (each row a collection of Python objects), Arrow stores all values for a column contiguously in a typed buffer. Nulls are tracked in a compact bitmap rather than per-cell None references. This design is the foundation for high-throughput data processing, and now mssql-python leverages it directly.
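To make the row-versus-column distinction concrete, here is a minimal sketch using only the Python standard library. It is not Arrow itself: `array` stands in for a typed Arrow buffer, and the helper names (`is_valid`, `column_to_list`) are made up for illustration. The bitmap convention it uses (one bit per value, least-significant bit first, 1 meaning "valid") matches the Arrow columnar format.

```python
from array import array

# Row-oriented: every row is a Python tuple, every cell a Python object.
rows = [(1, 9.5), (2, None), (3, 7.25)]

# Column-oriented: one contiguous typed buffer per column.
ids = array("q", [1, 2, 3])              # int64 values
scores = array("d", [9.5, 0.0, 7.25])    # float64 values; the null slot holds a placeholder

# Validity bitmap for `scores`: bit i is 1 when row i is non-null.
# Rows 0 and 2 are valid, so bits 0 and 2 are set.
scores_valid = bytearray([0b00000101])


def is_valid(bitmap, i):
    """Return True when bit i of the bitmap is set (LSB-first within each byte)."""
    return (bitmap[i >> 3] >> (i & 7)) & 1 == 1


def column_to_list(values, bitmap):
    """Rebuild Python values from a buffer and its validity bitmap."""
    return [v if is_valid(bitmap, i) else None for i, v in enumerate(values)]


print(column_to_list(scores, scores_valid))  # [9.5, None, 7.25]
```

The columnar form holds the same information as `rows`, but the values of each column sit next to each other in memory and the null is a single cleared bit.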

2. Technical Foundations: API, ABI, and the Arrow C Data Interface

To understand the power of Arrow in mssql-python, you need three key terms:

- API (Application Programming Interface): A source-code contract that defines how to call a function or library. It's the high-level agreement between caller and callee.

- ABI (Application Binary Interface): A binary-level contract that specifies how data structures and function calls are laid out in memory once the code is compiled. Two programs built in different languages can share an ABI and exchange data directly, with no serialization step.

- Arrow C Data Interface: Apache Arrow's ABI specification. It's the standard that makes zero-copy data exchange between languages possible. By implementing this interface, mssql-python can hand off data to any Arrow-native consumer without copying or conversion.
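As a rough illustration of what "sharing an ABI" means, the sketch below mirrors the `ArrowArray` struct from the Arrow C Data Interface specification using `ctypes`, fills it in on a "producer" side, and reads it back on a "consumer" side that only sees the struct. The field order follows the published spec; everything else (the variable names, the `read_int64_column` helper, leaving the `release` callback unset) is a simplification for illustration and not how mssql-python or any Arrow library is actually implemented.

```python
import ctypes


class ArrowArray(ctypes.Structure):
    """Mirror of `struct ArrowArray` from the Arrow C Data Interface."""


# The release callback receives a pointer to the struct it belongs to.
RELEASE_FN = ctypes.CFUNCTYPE(None, ctypes.POINTER(ArrowArray))

ArrowArray._fields_ = [
    ("length", ctypes.c_int64),
    ("null_count", ctypes.c_int64),
    ("offset", ctypes.c_int64),
    ("n_buffers", ctypes.c_int64),
    ("n_children", ctypes.c_int64),
    ("buffers", ctypes.POINTER(ctypes.c_void_p)),
    ("children", ctypes.POINTER(ctypes.POINTER(ArrowArray))),
    ("dictionary", ctypes.POINTER(ArrowArray)),
    ("release", RELEASE_FN),
    ("private_data", ctypes.c_void_p),
]

# Producer side: an int64 column with no nulls.
values = (ctypes.c_int64 * 4)(10, 20, 30, 40)
# Buffer 0 is the validity bitmap (may be NULL when null_count is 0),
# buffer 1 is the data buffer.
buffer_ptrs = (ctypes.c_void_p * 2)(None, ctypes.addressof(values))

exported = ArrowArray(
    length=4,
    null_count=0,
    offset=0,
    n_buffers=2,
    n_children=0,
    buffers=ctypes.cast(buffer_ptrs, ctypes.POINTER(ctypes.c_void_p)),
    # release is left NULL here; a real producer must set it so the
    # consumer can tell the producer when the memory may be freed.
)


def read_int64_column(arr):
    """Consumer side: read values knowing only the agreed struct layout."""
    data = ctypes.cast(arr.buffers[1], ctypes.POINTER(ctypes.c_int64))
    return [data[arr.offset + i] for i in range(arr.length)]


print(read_int64_column(exported))  # [10, 20, 30, 40]
```

The consumer never receives a copy of `values`. It follows the pointer stored in the struct, which is why a write to `values` on the producer side is immediately visible through `read_int64_column`.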

3. Speed Boost: No Per-Row Python Objects

Before Arrow support, fetching a million rows from SQL Server meant creating a million Python row objects, plus an object for every value in them, and then throwing them all away to build the DataFrame. With the Arrow fetch path, the entire loop runs in C++ and writes values directly into Arrow buffers. For many SQL Server types, especially temporal ones like DATETIME and DATETIMEOFFSET, this eliminates Python-side per-value conversions entirely. The result is noticeably faster fetching for those column types and lower overall query-to-DataFrame latency, and the saving grows with the size of the result set.
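Temporal columns show why per-value conversion matters. Arrow's timestamp type stores each value as a 64-bit integer count of time units since the Unix epoch, so a column of timestamps is just an int64 buffer. The sketch below imitates that in plain Python: `to_micros` and `from_micros` are hypothetical helpers, microsecond resolution is chosen for the example, and how mssql-python maps each SQL Server type to an Arrow type is not specified here.

```python
from array import array
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_micros(dt):
    """Encode an aware datetime as integer microseconds since the Unix epoch."""
    delta = dt - EPOCH
    return (delta.days * 86_400 + delta.seconds) * 1_000_000 + delta.microseconds


def from_micros(us):
    """Decode integer microseconds since the Unix epoch back to a datetime."""
    return EPOCH + timedelta(microseconds=us)


# Object-based path: one datetime object per value.
start = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
stamps = [start + timedelta(seconds=i) for i in range(5)]

# Columnar path: the same values as one contiguous int64 buffer.
column = array("q", (to_micros(dt) for dt in stamps))

# A consumer decodes a value only if and when it needs a Python object.
print(from_micros(column[0]).isoformat())  # 2024-01-01T12:00:00+00:00
```

In this sketch the datetime objects still exist because the source data is built in Python. In a native fetch loop the integers would be written straight into the buffer and no `datetime` would be created unless the consumer asks for one.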

4. Memory Efficiency: From a Million Objects to One Array

Memory usage is another area where Arrow shines. A column of one million integers in the old approach required a million individual Python integer objects, each with its own memory overhead. With Arrow, that same column is a single contiguous C array of integers: compact, cache-friendly, and efficient. Nulls are stored in a separate bitmap, taking just one bit per value instead of a pointer-sized reference to None in every empty cell. This means lower memory consumption for the fetch itself, and because Arrow buffers are reused downstream, subsequent operations like filters and aggregations also benefit from reduced memory pressure. The garbage collector gets a break, too.
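You can get a feel for the difference with the standard library alone. The sketch below compares a list of one million Python integers against a packed int64 buffer of the same values, with `array` standing in for an Arrow buffer. The object-side total depends on the CPython version and platform, so no specific figure is claimed; the packed size and bitmap size follow directly from the arithmetic.

```python
import sys
from array import array

N = 1_000_000

# Object-based column: a list of pointers plus one int object per value.
as_objects = list(range(N))
object_bytes = sys.getsizeof(as_objects) + sum(sys.getsizeof(v) for v in as_objects)

# Columnar column: N packed 8-byte integers.
as_column = array("q", range(N))
column_bytes = as_column.itemsize * len(as_column)

# Validity bitmap: one bit per value, rounded up to whole bytes.
bitmap_bytes = (N + 7) // 8

print(f"list of int objects : {object_bytes:>12,} bytes")
print(f"packed int64 buffer : {column_bytes:>12,} bytes")
print(f"validity bitmap     : {bitmap_bytes:>12,} bytes")
```

The packed buffer is exactly 8,000,000 bytes and the bitmap 125,000 bytes. The list-of-objects total comes out several times larger on a typical 64-bit CPython build.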

5. Seamless Interoperability with Arrow-Native Tools

One of the biggest advantages of Arrow is its ecosystem. Because mssql-python now exposes data via the Arrow C Data Interface, you can feed result sets directly into Polars, Pandas (using ArrowDtype), DuckDB, Hugging Face datasets, and any other library that understands Arrow. There's no need for intermediate conversions like to/from Pandas DataFrames or custom serialization. This makes mssql-python a first-class citizen in modern data science workflows, enabling pipelines that stay entirely within Arrow's zero-copy paradigm from database to analysis.

6. Community Contribution: Felix Graßl's Work

This feature wasn't built by a large team—it was contributed by community developer Felix Graßl (@ffelixg). His work demonstrates the power of open-source collaboration and the demand for better performance in SQL Server Python connectivity. We're thrilled to ship it and grateful for Felix's expertise. The Arrow integration is a perfect example of how a focused contribution can unlock big improvements for everyone. If you're a developer looking to get involved, this is a great time to dive into the mssql-python codebase and explore further enhancements.

7. Under the Hood: How the Arrow Fetch Path Works

Mechanically, the Arrow fetch path changes the entire data flow. The driver's C++ layer allocates Arrow buffers for each column and writes SQL Server data directly into them during the fetch loop. No Python object is created per row, so the garbage collector has nothing extra to track; the Python side simply receives a pointer to the finished buffers. The DataFrame library (Polars, etc.) can then operate on that memory in place, without any copying. Crucially, subsequent operations such as filters, joins, and aggregations read from those same buffers and produce Arrow data in turn. A Polars pipeline reading from mssql-python never needs to materialize intermediate Python objects at any stage. That makes Arrow the right foundation for high-throughput, low-latency data pipelines.
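The shape of that fetch loop can be sketched in plain Python. This is a toy model, not the driver's code: `Int64ColumnBuilder` and `fetch_into_columns` are hypothetical names, the "wire" is just a list of tuples, and the real loop runs in C++ so the incoming values are never Python objects in the first place. The point is the structure: one builder per column, values appended to a typed buffer, nulls recorded in a bitmap, and a final handoff that exposes the buffer without copying it.

```python
from array import array


class Int64ColumnBuilder:
    """Accumulates one int64 column as a value buffer plus a validity bitmap."""

    def __init__(self):
        self.values = array("q")
        self.validity = bytearray()
        self.null_count = 0

    def append(self, value):
        i = len(self.values)
        if i % 8 == 0:
            self.validity.append(0)       # start a new bitmap byte every 8 rows
        if value is None:
            self.values.append(0)         # placeholder; the cleared bit marks it null
            self.null_count += 1
        else:
            self.values.append(value)
            self.validity[i >> 3] |= 1 << (i & 7)


def fetch_into_columns(row_source, n_columns):
    """Drain a row stream into per-column builders, one pass, no row objects kept."""
    builders = [Int64ColumnBuilder() for _ in range(n_columns)]
    for row in row_source:
        for builder, value in zip(builders, row):
            builder.append(value)
    return builders


wire_rows = [(1, 100), (2, None), (3, 300)]
col_a, col_b = fetch_into_columns(iter(wire_rows), n_columns=2)

# Handoff: a memoryview exposes the buffer to a consumer without copying it.
view = memoryview(col_b.values)
print(view.tolist(), col_b.null_count, bin(col_b.validity[0]))
```

A consumer holding `view` reads the same bytes the builder wrote, which is the property the Arrow C Data Interface provides across language boundaries.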

Conclusion

Apache Arrow support in mssql-python marks a major step forward for anyone working with SQL Server data in modern Python data tools. Whether you're building a real-time analytics pipeline, loading data into a machine learning model, or just trying to speed up your daily ETL, this feature delivers measurable improvements in speed and memory efficiency. Thanks to Felix Graßl's contribution, SQL Server results now land directly in Arrow buffers, and the handoff from the driver to Arrow-native libraries is zero-copy. Try it out with your next query, and see the difference for yourself.