How to Engineer a Memory Chip That Defies Miniaturization Limits

Introduction

For decades, the semiconductor industry has faced a seemingly insurmountable barrier: as electronic components shrink, they waste more energy as heat, leading to overheating and shortened battery life. But a recent breakthrough has overturned this rule. Researchers have created a memory device that actually improves as it gets smaller, a feat once considered science fiction. This guide walks you through the principles and steps for designing a similar ultra-efficient memory unit that could transform smartphones, wearables, and AI systems. Whether you're a chip designer, a materials scientist, or an engineering student, these steps distill the core innovations into a practical blueprint.

What You Need

Before diving into the design process, gather the following tools, materials, and knowledge:

- Materials: Two-dimensional semiconductors (e.g., transition metal dichalcogenides such as MoS₂), graphene (a semimetal, used here as a heat-spreading layer), high-k dielectrics, metal contacts for electrodes, and a substrate (silicon or flexible polymer).

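- Equipment: Atomic layer deposition (ALD) system for ultra-thin films, electron-beam lithography for nanoscale patterning, sputtering and electron-beam evaporation tools for the electrodes, a chemical vapor deposition (CVD) system for graphene growth, and a probe station for electrical characterization.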

- Software: TCAD simulation tools (e.g., Silvaco Atlas or Synopsys Sentaurus) for modeling charge transport and energy loss.

- Prerequisite Knowledge: A firm grasp of solid-state physics, particularly band theory, tunneling, and resistive switching mechanisms. Familiarity with memory architectures (e.g., NAND and NOR flash, RRAM) is helpful.

Step-by-Step Guide

Step 1: Rethink the Scaling Paradigm

Traditional miniaturization follows Dennard scaling, in which voltage and dimensions shrink proportionally so that power density stays constant. That model broke down in the mid-2000s, when supply and threshold voltages could no longer be lowered without a sharp rise in leakage, and below 10 nanometers leakage currents and edge effects grow even more severe. Your first task is to abandon this model. Instead, target a non-linear energy reduction by focusing on quantum confinement and interface engineering. Use TCAD simulations to identify where energy loss spikes at smaller nodes; those regions will guide your redesign.
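To see why voltage scaling matters so much, you can work through the textbook first-order scaling factors. The sketch below is a constant-field (Dennard) model, not a device simulator: it assumes a long-channel square-law current I ∝ (W/L)·C_ox·V², an oxide capacitance per area C_ox ∝ 1/t_ox, and it ignores threshold voltage and leakage. The function name `scaling_factors` is hypothetical.

```python
from fractions import Fraction


def scaling_factors(k, voltage_factor=None):
    """First-order factors when every dimension (L, W, t_ox) shrinks by 1/k.

    Assumes a long-channel square-law transistor, I ~ (W/L) * C_ox * V^2,
    with C_ox ~ 1/t_ox; threshold voltage and leakage are ignored.
    voltage_factor defaults to 1/k, i.e. ideal constant-field scaling.
    """
    k = Fraction(k)
    length = 1 / k                      # L, W and t_ox all shrink by 1/k
    v = Fraction(voltage_factor) if voltage_factor is not None else 1 / k
    c_ox = k                            # thinner oxide -> more capacitance per area
    current = c_ox * v ** 2             # W/L ratio is unchanged
    capacitance = c_ox * length ** 2    # gate capacitance = C_ox * W * L
    power = v * current
    area = length ** 2
    return {
        "voltage": v,
        "current": current,
        "delay": capacitance * v / current,
        "switching_energy": capacitance * v ** 2,
        "power": power,
        "power_density": power / area,
    }


ideal = scaling_factors(2)                    # halve every dimension and the voltage
stuck = scaling_factors(2, voltage_factor=1)  # halve dimensions, keep the voltage
print(ideal["power_density"], stuck["power_density"])
```

With ideal voltage scaling the power density stays constant; freeze the supply voltage and, in this model, it grows as k³, which is the wall this step asks you to design around.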

Step 2: Select a Low-Dimensional Material

Conventional silicon struggles at sub-5 nm scales because of surface roughness and dopant fluctuations. Choose a 2D material such as molybdenum disulfide (MoS₂) or phosphorene. These materials have atomically uniform surfaces and exhibit ionic conduction when paired with certain dielectrics. For a memory cell, the active layer should be just 1–3 monolayers thick (one MoS₂ monolayer is an S-Mo-S sandwich of three atomic planes) to minimize bulk leakage. Grow the material by ALD or CVD, or mechanically exfoliate flakes and transfer them onto a clean substrate.
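A quick way to translate the 1–3 monolayer target into physical thickness, and to sanity-check a measured film, is sketched below. The per-monolayer thickness of about 0.65 nm is an assumed, commonly quoted value for MoS₂; substitute the figure for your own material. The function names are hypothetical. Measured step heights (for example from AFM) often exceed the nominal value because of adsorbates, so cross-check the layer count with Raman or photoluminescence before trusting the rounding.

```python
MOS2_MONOLAYER_NM = 0.65  # assumed thickness of one S-Mo-S monolayer, in nm


def thickness_nm(n_layers, monolayer_nm=MOS2_MONOLAYER_NM):
    """Nominal thickness of a stack of n_layers monolayers."""
    if n_layers < 1:
        raise ValueError("need at least one monolayer")
    return n_layers * monolayer_nm


def estimate_layers(measured_nm, monolayer_nm=MOS2_MONOLAYER_NM):
    """Round a measured film thickness to the nearest whole number of layers."""
    return max(1, round(measured_nm / monolayer_nm))


def within_target(measured_nm, min_layers=1, max_layers=3):
    """True if the measured film corresponds to min_layers..max_layers monolayers."""
    return min_layers <= estimate_layers(measured_nm) <= max_layers


# Hypothetical measured thicknesses, in nm.
for measured in (0.7, 1.9, 3.4):
    print(measured, estimate_layers(measured), within_target(measured))
```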

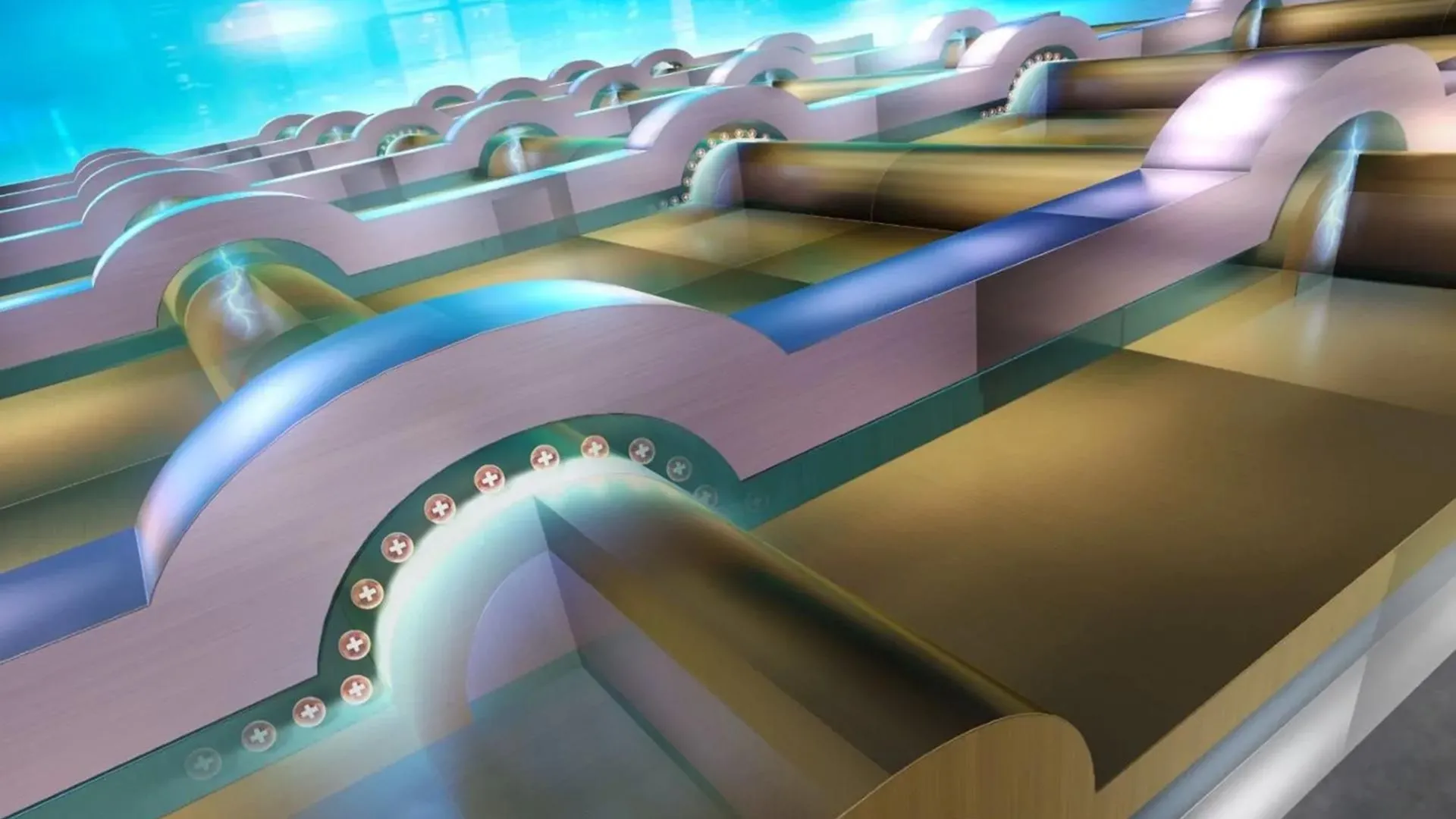

Step 3: Design a Three-Layer Vertical Stack

The memory device relies on a vertical structure: top electrode / switching layer / bottom electrode. But here’s the twist: the switching layer must be a graded dielectric (e.g., HfO₂ with an embedded oxygen-deficient zone). This creates a built-in potential that amplifies the resistive switching effect as the cell shrinks. Fabricate the bottom electrode (e.g., titanium nitride) using sputtering, then deposit the graded dielectric via ALD, varying the oxygen pulse ratio to create a gradient. Cap with a top electrode (gold or platinum) deposited by e-beam evaporation.
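It can help to write the graded-dielectric recipe as an explicit per-cycle schedule before loading it into the ALD tool. The sketch below makes several assumptions that are not in the text: the oxygen-deficient zone sits next to the top electrode, the "oxygen pulse ratio" is represented as a relative oxidant dose (1.0 = your standard stoichiometric HfO₂ dose) ramped linearly across cycles, and the growth per cycle is about 0.1 nm. `AldCycle` and `graded_schedule` are hypothetical names. Because ALD half-reactions are self-limiting, confirm experimentally that a reduced dose really produces a sub-stoichiometric film.

```python
from dataclasses import dataclass


@dataclass
class AldCycle:
    index: int
    oxidant_dose: float  # relative to the stoichiometric dose (1.0)
    depth_nm: float      # approximate film thickness after this cycle


def graded_schedule(n_cycles, min_dose=0.4, growth_per_cycle_nm=0.1):
    """Linearly ramp the oxidant dose from 1.0 (bottom) down to min_dose (top).

    Cycle 0 is deposited on the bottom electrode; the last cycle sits under
    the top electrode, where the assumed oxygen-deficient zone is placed.
    """
    if n_cycles < 2:
        raise ValueError("a gradient needs at least two cycles")
    if not 0.0 < min_dose <= 1.0:
        raise ValueError("min_dose must be in (0, 1]")
    step = (1.0 - min_dose) / (n_cycles - 1)
    return [
        AldCycle(i, 1.0 - i * step, (i + 1) * growth_per_cycle_nm)
        for i in range(n_cycles)
    ]


recipe = graded_schedule(30)  # roughly 3 nm of graded dielectric
print(recipe[0], recipe[-1], sep="\n")
```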

Step 4: Minimize Contact Resistance

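At nanometer dimensions, contact resistance between metal and semiconductor can dominate power loss. To counteract this, use a van der Waals contact by transferring the 2D material directly onto the electrodes without covalent bonding. This reduces interface defects and Fermi-level pinning, which lowers the Schottky barrier height when the contact metal is chosen well. For measurement, use a transmission line method (TLM) test structure to extract the specific contact resistivity ρc. Because contact resistance scales as Rc = ρc/A, a nanometer-scale contact needs ρc many orders of magnitude below 1 Ω·cm² to keep Rc small compared with the cell's ON-state resistance.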
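The TLM analysis itself is a straight-line fit: total resistance between pads of width W separated by gap L follows R_T = 2R_c + (R_sh/W)·L. The sketch below fits that line by ordinary least squares and derives the transfer length L_T and ρc = R_sh·L_T², assuming the pads are much longer than L_T. It also reports the width-normalized R_c·W (Ω·µm), the figure usually quoted for 2D-material contacts. The function name and the sample data are hypothetical.

```python
def tlm_extract(gaps_um, resistances_ohm, width_um):
    """Extract contact parameters from a TLM series.

    Fits R_T = 2*R_c + (R_sh / W) * L by least squares, assuming contacts
    much longer than the transfer length L_T.
    """
    n = len(gaps_um)
    if n != len(resistances_ohm) or n < 2:
        raise ValueError("need at least two matching (gap, resistance) pairs")
    mean_x = sum(gaps_um) / n
    mean_y = sum(resistances_ohm) / n
    sxx = sum((x - mean_x) ** 2 for x in gaps_um)
    if sxx == 0:
        raise ValueError("gap spacings must differ")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(gaps_um, resistances_ohm))
    slope = sxy / sxx                    # R_sh / W, in ohm per um
    intercept = mean_y - slope * mean_x  # 2 * R_c, in ohm
    r_c = intercept / 2
    r_sh = slope * width_um              # sheet resistance, ohm/sq
    l_t = r_c / slope                    # transfer length in um (= R_c * W / R_sh)
    rho_c_ohm_um2 = r_sh * l_t ** 2
    return {
        "R_c_ohm": r_c,
        "R_c_W_ohm_um": r_c * width_um,
        "R_sh_ohm_per_sq": r_sh,
        "L_T_um": l_t,
        "rho_c_ohm_cm2": rho_c_ohm_um2 * 1e-8,  # 1 um^2 = 1e-8 cm^2
    }


# Hypothetical TLM data: 10 um wide pads, gaps of 1-8 um.
result = tlm_extract([1, 2, 4, 8], [200, 300, 500, 900], width_um=10)
print(result)
```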

Step 5: Optimize Switching Energy

The heart of the improvement is that energy per bit drops as the cell scales down. To achieve this, you need a filament-free switching mechanism: rely on layer-dependent permittivity changes rather than conductive bridge formation. Apply a pulsed I-V sweep (e.g., ±1 V, 10 ns pulses) and measure the set and reset voltages. You should see that smaller devices require lower voltage and current because of enhanced local field effects. Adjust the dielectric thickness until the switching energy, the integral of voltage times current over the pulse, is below 0.1 pJ per bit at a 5 nm node.
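A quick hand check shows how tight the budget is: 1 V × 10 µA × 10 ns = 0.1 pJ, so with ±1 V, 10 ns pulses the cell current must stay below roughly 10 µA. For real measurements, compute the energy from the recorded waveforms rather than from nominal settings. The sketch below integrates V(t)·I(t) with the trapezoidal rule; it assumes your probe station exports aligned time, voltage and current samples, and the pulse values and function names are hypothetical.

```python
def switching_energy_j(times_s, volts, amps):
    """Energy delivered to the cell, E = integral of V(t) * I(t) dt (trapezoidal rule)."""
    if not (len(times_s) == len(volts) == len(amps)) or len(times_s) < 2:
        raise ValueError("need at least two aligned samples")
    energy = 0.0
    for k in range(len(times_s) - 1):
        p0 = volts[k] * amps[k]
        p1 = volts[k + 1] * amps[k + 1]
        energy += 0.5 * (p0 + p1) * (times_s[k + 1] - times_s[k])
    return energy


def meets_budget(energy_j, budget_pj=0.1):
    """True if the per-bit energy is below the budget given in pJ."""
    return energy_j < budget_pj * 1e-12


# Hypothetical 10 ns set pulse at 0.8 V drawing 5 uA, sampled every 1 ns.
t = [k * 1e-9 for k in range(11)]
v = [0.8] * len(t)
i = [5e-6] * len(t)
e = switching_energy_j(t, v, i)
print(f"{e * 1e12:.3f} pJ, within budget: {meets_budget(e)}")
```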

Step 6: Integrate Heat Dissipation Structures

Even with reduced energy loss, any remaining heat must be managed. Incorporate a graphene interlayer between the device and the substrate. Graphene's high in-plane thermal conductivity (reported at roughly 2000–5000 W/m·K for suspended monolayers, and closer to 600 W/m·K when supported on SiO₂) spreads heat laterally, preventing hot spots. This is critical for the 'improves as it shrinks' property, because cooler operation allows denser packing. Use chemical vapor deposition (CVD) to grow graphene and transfer it onto the chip.
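To judge whether a graphene spreader is worth the extra transfer step, a first-order 1D conduction estimate is often enough: R_th = L/(k·W·t) and ΔT = P·R_th. The sketch below ignores heat flow into the substrate, interface (Kapitza) resistance and radial spreading, and it uses 0.335 nm (the graphite interlayer spacing) as the monolayer thickness; the geometry, power and function names are hypothetical.

```python
GRAPHENE_THICKNESS_M = 0.335e-9  # graphite interlayer spacing, used as monolayer thickness


def lateral_thermal_resistance(length_m, width_m, k_w_per_mk, thickness_m=GRAPHENE_THICKNESS_M):
    """1D conduction resistance of a thin strip, R_th = L / (k * W * t), in K/W."""
    return length_m / (k_w_per_mk * width_m * thickness_m)


def temperature_rise_k(power_w, r_th_k_per_w):
    """Temperature rise across the strip for a given dissipated power."""
    return power_w * r_th_k_per_w


# Hypothetical 1 um x 1 um spreading path carrying 1 uW.
for label, k in (("suspended, ~5000 W/m*K", 5000.0), ("on SiO2, ~600 W/m*K", 600.0)):
    r_th = lateral_thermal_resistance(1e-6, 1e-6, k)
    print(f"{label}: dT = {temperature_rise_k(1e-6, r_th):.2f} K")
```

Because R_th scales as 1/k, the substrate-induced drop in conductivity translates directly into a proportionally larger temperature rise, so measure k on your actual stack rather than relying on suspended-film values.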

Step 7: Test Under Realistic Workloads

Lab-bench success doesn't guarantee real-world performance. Program your test chip with repeated read/write cycles (at least 10⁶ operations) at temperatures from 0 to 85 °C, and monitor endurance throughout. Ten-year retention at 85 °C cannot be measured directly, so estimate it from accelerated bakes at higher temperatures and extrapolate to 85 °C with an Arrhenius model. The new cell should maintain a resistance ratio (OFF/ON) greater than 10³ even after scaling down. If the device degrades, revisit the graded dielectric recipe; oxygen vacancy mobility often needs fine-tuning.
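The retention extrapolation assumes failure times follow t = A·exp(Ea/(k_B·T)), so ln t is linear in 1/(k_B·T). The sketch below fits that line to bake results and extrapolates to 85 °C. It assumes a single thermally activated failure mechanism; the 150–200 °C bake temperatures and the Ea = 1.2 eV, A = 1e-7 s used to generate the synthetic data are illustrative assumptions, not results, and the function names are hypothetical.

```python
import math

K_B_EV = 8.617333262e-5  # Boltzmann constant, eV/K
TEN_YEARS_S = 10 * 365.25 * 24 * 3600


def fit_arrhenius(temps_c, fail_times_s):
    """Fit ln(t) = ln(A) + Ea / (k_B * T) by least squares; returns (Ea_eV, ln_A)."""
    xs = [1.0 / (K_B_EV * (tc + 273.15)) for tc in temps_c]
    ys = [math.log(t) for t in fail_times_s]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return slope, my - slope * mx


def extrapolate_retention_s(ea_ev, ln_a, temp_c):
    """Predicted time to failure at temp_c under the fitted Arrhenius model."""
    return math.exp(ln_a + ea_ev / (K_B_EV * (temp_c + 273.15)))


# Synthetic bake data from an assumed Ea = 1.2 eV and A = 1e-7 s, standing in
# for measured times until the OFF/ON ratio drops below 10^3.
temps_c = [150.0, 175.0, 200.0]
times_s = [extrapolate_retention_s(1.2, math.log(1e-7), tc) for tc in temps_c]

ea, ln_a = fit_arrhenius(temps_c, times_s)
t85 = extrapolate_retention_s(ea, ln_a, 85.0)
print(f"Ea = {ea:.2f} eV, 10-year target met at 85 C: {t85 > TEN_YEARS_S}")
```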

Tips for Success

- Embrace non-idealities: The key insight is that at extreme scales, quantum effects like tunneling and interface dipoles can be harnessed rather than fought. Use simulation to find the sweet spot where these effects reduce power.

- Monitor process variability: A variation of even one atomic layer can change switching parameters. Use statistical process control (SPC) during ALD to keep the layer count uniform.

- Consider monolithic 3D integration: To fully exploit the energy benefits, stack multiple memory layers vertically. This reduces interconnect length and capacitance, further cutting power.

- Validate with cross-section TEM: After fabrication, confirm the graded dielectric profile with transmission electron microscopy (TEM) and energy-dispersive X-ray spectroscopy (EDS) mapping. The gradient should be smooth without abrupt junctions.

- Think beyond memory: This architecture can be adapted for in-memory computing, where logic operations occur directly inside the memory array—ideal for AI acceleration.
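The process-variability tip can be put into practice with a simple Shewhart-style control chart on film thickness. The sketch below sets ±3σ limits from an in-control baseline and flags later runs that fall outside them. It uses the sample standard deviation as a simplification (a formal individuals chart estimates σ from the average moving range), and the ellipsometry readings and function names are hypothetical.

```python
import statistics


def control_limits(baseline, sigmas=3.0):
    """Return (LCL, center, UCL) from an in-control baseline of measurements."""
    center = statistics.mean(baseline)
    spread = statistics.stdev(baseline)
    return center - sigmas * spread, center, center + sigmas * spread


def out_of_control(samples, limits):
    """Indices of samples that fall outside the control limits."""
    lcl, _, ucl = limits
    return [idx for idx, x in enumerate(samples) if x < lcl or x > ucl]


# Hypothetical thickness readings (nm) for a fixed ALD cycle count.
baseline_nm = [2.00, 2.10, 1.90, 2.00, 2.00]
limits = control_limits(baseline_nm)
new_runs_nm = [2.05, 2.40, 1.70]
print(limits, out_of_control(new_runs_nm, limits))
```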